In today’s hyper-connected world, speed matters more than ever. From streaming services to smart homes and autonomous vehicles, modern technology demands real-time processing. That makes a plain-language explanation of edge computing useful for non-technical readers who want to understand how data moves and gets processed. Instead of relying entirely on distant cloud servers, edge systems process information closer to where it is generated. Knowing the basics helps individuals and businesses see why this shift is happening. Cloud and edge differ mainly in where the processing happens, which in turn affects latency, bandwidth use, and efficiency. As digital transformation accelerates in 2026, a clear picture of edge computing helps explain why it plays such a large role in everyday technology.

What Is Edge Computing and Why It Matters

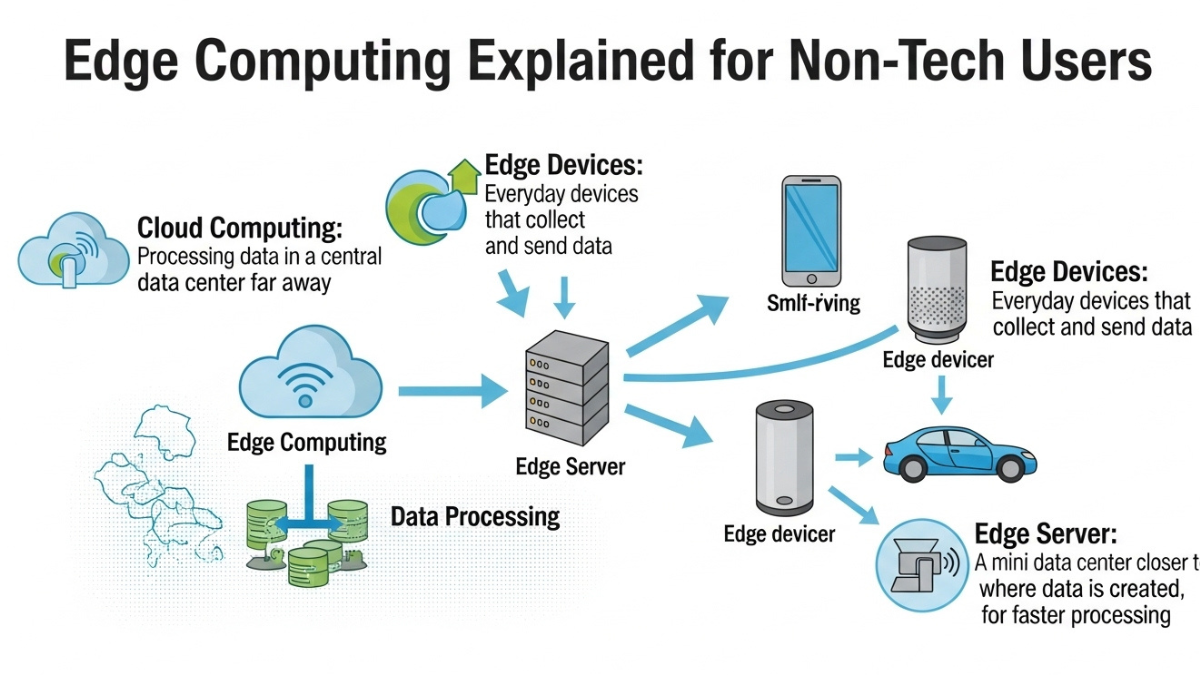

Put simply, edge computing means processing data near its source, such as a smartphone, IoT device, or local server, instead of sending everything to centralized cloud data centers. This approach reduces latency and improves performance, especially for applications that require instant decisions.

For example, in a self-driving car, waiting for data to travel to the cloud and back could cause dangerous delays. This is where the difference between cloud and edge matters. While cloud computing centralizes data storage and processing, edge computing distributes it across local devices.

Key reasons why edge computing is gaining popularity:

- Faster response times

- Reduced bandwidth usage

- Improved data privacy

- Better reliability in remote areas

- Lower operational costs in certain use cases

Industries like healthcare, manufacturing, and retail are adopting this model rapidly. Comparing the two approaches shows that edge computing complements cloud systems rather than replacing them entirely.

Edge Computing Basics for Everyday Users

The basics are easiest to understand in practical terms. Imagine a smart thermostat. Instead of sending every temperature reading to a cloud server and waiting for instructions, the device decides on adjustments locally. This speeds up reactions and reduces the amount of data it needs to send.
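The thermostat idea can be sketched in a few lines of Python. This is only an illustration, not how any particular product works: the target temperature, the tolerance, and the function names `decide` and `summarize` are all hypothetical. Each reading is acted on locally, and only a small summary would be sent to the cloud.

```python
from statistics import mean

TARGET_C = 21.0     # hypothetical target temperature in degrees Celsius
TOLERANCE_C = 0.5   # hypothetical dead band around the target


def decide(reading_c: float) -> str:
    """Decide on the device itself what to do with one reading."""
    if reading_c < TARGET_C - TOLERANCE_C:
        return "heat"
    if reading_c > TARGET_C + TOLERANCE_C:
        return "cool"
    return "idle"


def summarize(readings: list[float]) -> dict:
    """Build the small summary that would be uploaded instead of every reading."""
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": round(mean(readings), 2),
    }


readings = [19.8, 20.4, 21.0, 21.9, 22.3]
actions = [decide(r) for r in readings]   # handled locally, no network needed
summary = summarize(readings)             # the only data meant for the cloud

print(actions)
print(summary)
```

Five readings produce five immediate local decisions but only one small record for the cloud, which is the bandwidth saving described above.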

Here are some real-life examples of edge computing:

- Smart security cameras analyzing footage locally

- Wearable health devices processing heart rate instantly

- Online gaming reducing lag through local servers

- Smart factories detecting equipment failures in real time

Cloud systems remain useful for storing large datasets and running long-term analytics. Real-time processing, however, benefits significantly from local computation.

The table below compares cloud and edge computing:

| Feature | Cloud Computing | Edge Computing |
|---|---|---|
| Data Processing Location | Centralized data centers | Near the data source |
| Latency | Higher latency | Low latency |
| Bandwidth Usage | High | Reduced |
| Scalability | Highly scalable | Limited but improving |
| Ideal Use Cases | Data storage, analytics | Real-time applications |

The table makes the distinction easier to see: cloud and edge differ mainly in where and how data is processed.

Cloud vs Edge: Key Differences Simplified

Many people assume that either cloud or edge must be better than the other. In practice, the two serve different purposes. Cloud computing is ideal for centralized control, backups, and advanced analytics. Edge computing, on the other hand, excels in speed-sensitive environments.

The main differences come down to this:

- Edge reduces latency by minimizing travel distance for data

- Cloud offers massive storage and computing power

- Edge improves performance in unstable internet areas

- Cloud supports global access and collaboration
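To see why travel distance matters for latency, here is a rough back-of-the-envelope sketch. It assumes signals travel through optical fiber at about 200,000 km per second (roughly two thirds of the speed of light) and ignores routing, queuing, and processing time, so real delays are higher. The two distances are hypothetical examples, not measurements.

```python
FIBER_SPEED_KM_PER_S = 200_000  # approximate signal speed in optical fiber


def round_trip_ms(distance_km: float) -> float:
    """Minimum round-trip travel time in milliseconds (propagation only)."""
    return 2 * distance_km / FIBER_SPEED_KM_PER_S * 1000


cloud_ms = round_trip_ms(2000)  # hypothetical distant cloud data center
edge_ms = round_trip_ms(10)     # hypothetical nearby edge server

print(f"cloud: about {cloud_ms:.1f} ms, edge: about {edge_ms:.1f} ms")
```

Under these assumptions the distant data center costs about 20 ms in travel time alone, while the nearby edge server costs about 0.1 ms. The shorter the path, the lower the floor on how fast a response can arrive.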

The cloud-versus-edge debate is not about replacement but collaboration. Modern IT infrastructure often combines both models in what is known as a hybrid system. In such systems, edge devices handle time-sensitive work locally while the cloud provides storage, heavy computation, and scalability.

For non-technical users, the takeaway is simple: some data is processed locally for speed, while other data is sent to the cloud for storage and analysis.
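A hybrid setup can be pictured with a small sketch. Everything here is an assumption made for illustration: the `EdgeNode` class, the heart-rate threshold, and the batch size are hypothetical, and the "upload" is simulated by appending to a list rather than calling a real cloud service. Urgent readings trigger a local alert right away, while all readings are grouped into batches for later cloud analysis.

```python
from dataclasses import dataclass, field


@dataclass
class EdgeNode:
    alert_threshold: int = 150  # hypothetical heart rate limit in beats per minute
    batch_size: int = 3         # hypothetical number of readings per upload
    pending: list[int] = field(default_factory=list)
    uploaded_batches: list[list[int]] = field(default_factory=list)
    alerts: list[int] = field(default_factory=list)

    def handle(self, bpm: int) -> None:
        # Edge side: react immediately, without waiting for the network.
        if bpm > self.alert_threshold:
            self.alerts.append(bpm)
        # Cloud side: collect readings and send them in batches.
        self.pending.append(bpm)
        if len(self.pending) >= self.batch_size:
            self.uploaded_batches.append(self.pending)  # simulated upload
            self.pending = []


node = EdgeNode()
for bpm in [72, 75, 160, 80, 78]:
    node.handle(bpm)

print(node.alerts)
print(node.uploaded_batches)
print(node.pending)
```

The split mirrors the hybrid idea: the alert path never depends on the cloud, and the cloud still receives the full history for long-term analysis.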

Real-World Applications of Edge Computing in 2026

By 2026, edge computing is no longer just a technical concept; it is part of everyday life. Industries use it to improve efficiency and customer experience. Retail stores use smart shelves that process inventory data locally. Hospitals rely on edge devices for real-time patient monitoring.

Here are industries benefiting from combining cloud and edge:

- Healthcare: Real-time diagnostics

- Manufacturing: Predictive maintenance

- Transportation: Traffic monitoring systems

- Agriculture: Smart irrigation systems

- Smart Cities: Intelligent traffic lights

Processing data at the edge allows businesses to reduce delays and improve service reliability. Edge solutions are especially valuable in environments that require immediate action.

As digital ecosystems expand, edge computing continues to evolve. Faster 5G networks, improved IoT devices, and decentralized architectures are pushing innovation forward.

Final Thought

Understanding edge computing helps non-technical users navigate today’s fast-moving digital landscape. The basics show how local data processing improves speed, reliability, and privacy. Cloud and edge complement each other rather than compete. As innovation continues in 2026 and beyond, edge computing will play a foundational role in supporting smarter devices, efficient systems, and real-time digital experiences across industries.

FAQs

What does edge computing mean in simple terms?

Edge computing means processing data closer to where it is created instead of sending it to distant cloud servers, which reduces delays and improves speed.

How is edge computing different from cloud computing?

Edge computing focuses on local data processing, while cloud computing relies on centralized servers for storage and analysis.

Are cloud and edge computing in competition?

No. Both technologies often work together in hybrid systems to balance speed and scalability.

Why is edge computing important in 2026?

Edge computing is important in 2026 because real-time applications like IoT, smart cities, and autonomous systems require low latency and faster processing.
